Efficiency of Kolmogorov–Arnold Networks in Small Medical Samples (Case Study of 2D Brain MRI Image Segmentation)

The aim of the study was to evaluate the efficiency of the U-Net-based KANU-Net 2D architecture in segmenting 2D brain MRI images from the BraTS dataset with a limited number of training samples.

Materials and Methods. The experiments were carried out on training subsamples of 50, 100, and 150 images. Data preprocessing included normalization, gamma correction, cropping, and augmentation. A combination of Dice loss and binary cross-entropy (BCE) loss served as the loss function, and the network was optimized with AdamW. Performance was evaluated using Accuracy, the Dice coefficient for each tumor region, and the mean Dice coefficient.

Results. When trained on small samples, KANU-Net 2D achieved performance comparable to current state-of-the-art (SOTA) convolutional neural network models. Specifically, the mean Dice coefficient reached 0.851 with 100 training samples.

Conclusion. KANU-Net 2D outperformed the Med-DANet segmentation model both in the mean Dice value and in individual region classes. The model's effectiveness across tumor regions highlights the ability of the Kolmogorov–Arnold network (KAN) approach to adapt to various image characteristics in medical segmentation tasks. The results demonstrate the promise of KAN for medical image segmentation on small samples and can lay the foundation for further research in this field.

Introduction

Tumor segmentation in brain MRI images is a challenging task in digital medical data processing. Early detection and diagnosis play a critical role in effective treatment: according to WHO, nearly 10 million people died from cancer worldwide in 2020 [1]. Unlike screening chest X-ray for lung disease [2], no comparable screening examination exists for the brain, and cancer markers detected in patients do not localize tumors in routine examinations [3]. Images acquired with different MRI modalities differ in parameters such as brightness and gamma [4], and current image processing algorithms cannot adapt effectively to every MRI examination.

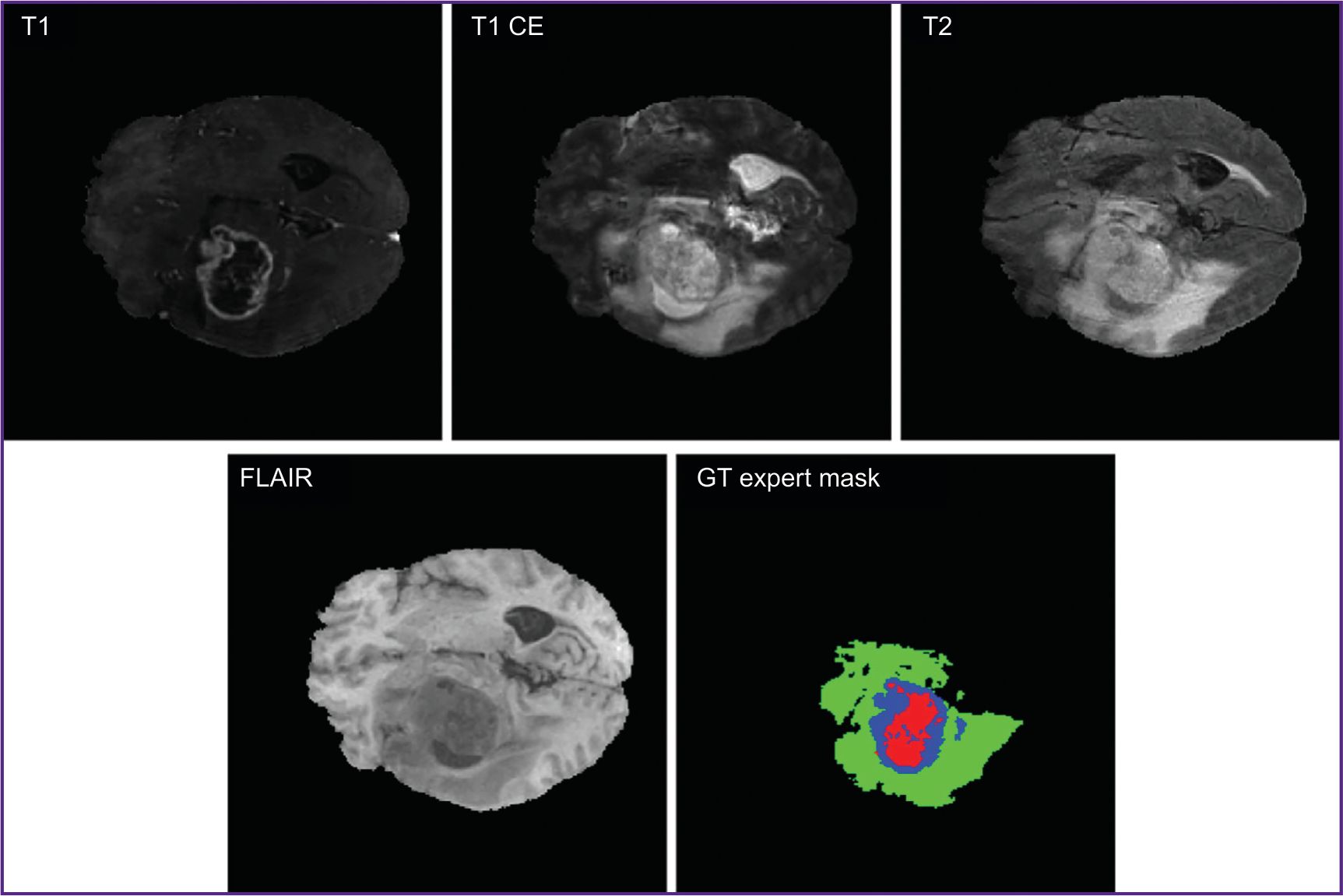

Current medical image segmentation techniques include threshold segmentation, region splitting and merging, morphological segmentation, clustering, region growing, and others [5, 6]. However, MR images are still often analyzed manually by physicians, which is a complex and resource-consuming process [7]. Figure 1 shows MRI images in different modalities (T1, T1 CE, T2, FLAIR [3]) and the ground-truth (GT) mask annotated by medical experts.

Currently, algorithms based on convolutional neural networks are among the most advanced techniques for medical image segmentation [8]. U-Net [9] is an example of such an algorithm; it has proved to be one of the best architectures for medical images, and many modern techniques have been developed on its basis.

U-Net consists of two main parts: an encoder and a decoder. The encoder compresses the image into a lower-resolution representation, while the decoder restores the resolution of the result. The two parts are connected through convolutional layers that extract key features at different levels of abstraction. Each encoder layer detects certain patterns in the image and generates a feature map, and each decoder layer upsamples the resulting feature map. Every convolution is followed by batch normalization and a nonlinear ReLU activation, then by one more convolution and 2×2 max pooling, which reduces the spatial dimensions of the feature map and thereby condenses the information passed further through the network. At the output, the network yields the probability that each pixel belongs to one of the predetermined classes.
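To make the downsampling step concrete, the following is a minimal sketch of 2×2 max pooling on a single feature map. It uses plain Python lists for readability; the function name and the toy feature map are illustrative assumptions, not part of any U-Net implementation.

```python
def max_pool_2x2(feature_map):
    """Downsample a 2D map (even height and width) by keeping the max of each 2x2 block."""
    h, w = len(feature_map), len(feature_map[0])
    return [
        [
            max(
                feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1],
            )
            for c in range(0, w, 2)
        ]
        for r in range(0, h, 2)
    ]


# Toy 4x4 feature map (hypothetical values); pooling halves each spatial dimension.
fmap = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [3, 6, 4, 8],
]
pooled = max_pool_2x2(fmap)  # 2x2 result
```

Each output value summarizes one 2×2 neighborhood, which is why the spatial size is halved at every encoder stage.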

An analysis of studies using models based on the U-Net 2D architecture [10–12] showed that high values of the Dice coefficient (the main metric of network quality) are achieved with large training samples of 369 images or more. Since modern neural network models require very large datasets for training, developing algorithms that perform equally well on small samples remains a problem [13].

Small samples arise in medicine because some diseases are rare and therefore yield few observations. As a result, large-scale solutions based on convolutional neural networks cannot be applied to such real-world problems [14]. In neuro-oncology, the small-sample problem is particularly relevant for rare brain tumors and tumors of uncommon location. Tools that operate effectively on limited data would accelerate the introduction of clinical decision support systems into routine clinical practice.

Neural networks based on the Kolmogorov–Arnold theorem (KAN) [15] are promising in terms of their applicability to small samples. Among their advantages reported in the literature [16] is robustness when training on small datasets, although the approach has not been adequately studied for medical data. Slow training is mentioned as one of the disadvantages. Other characteristics of KAN are reviewed in [15, 16].

Studies of KAN in medicine remain scarce, although KAN has shown promise on small medical samples. Moreover, the existing studies have focused primarily on classification and on simple, high-contrast images, such as MedMNIST (a large-scale collection of standardized medical images) and chest X-rays with clearly visible features [17, 18], which leaves much of the range of medical image processing tasks uncovered.

The aim of the study was to evaluate the efficiency of the U-Net-based KANU-Net 2D architecture in segmenting 2D brain MRI images from the BraTS dataset with a limited number of training samples.

Materials and Methods

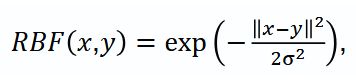

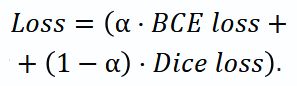

The network architecture. The study used the KANU-Net 2D network [19], which is based on a classic U-Net with an encoder-decoder structure and residual connections. In this architecture, the standard Conv2D convolutional layers were replaced with FastKANConvLayer layers. Each FastKANConvLayer transformed the input features using a radial basis function (RBF):

where x is the input image vector, y is the center of the function, and σ is the kernel width.
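As a minimal sketch of such an RBF expansion, the snippet below evaluates a Gaussian kernel, one common RBF choice, at evenly spaced centers. The Gaussian form, the function names, and the choice of σ equal to the grid spacing are assumptions made for illustration and are not confirmed details of FastKANConvLayer. The default grid range [−1.0; 1.0] and the 4 grid points follow the configuration listed in the Experiments subsection.

```python
import math


def gaussian_rbf(x, center, sigma):
    """Gaussian RBF (assumed form): exp(-0.5 * ((x - center) / sigma) ** 2)."""
    return math.exp(-0.5 * ((x - center) / sigma) ** 2)


def rbf_expand(x, grid_min=-1.0, grid_max=1.0, num_grids=4):
    """Expand a scalar input into num_grids RBF responses on evenly spaced centers."""
    step = (grid_max - grid_min) / (num_grids - 1)
    centers = [grid_min + i * step for i in range(num_grids)]
    sigma = step  # assumption: kernel width equals the spacing between centers
    return [gaussian_rbf(x, c, sigma) for c in centers]


# An input sitting exactly on the first center responds most strongly there,
# and the response decays for centers that are farther away.
features = rbf_expand(-1.0)
```

In a KAN-style layer, a learnable linear combination of such responses replaces a fixed activation function; that learnable part is omitted here.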

The network comprised an encoder, built as a series of DoubleConv blocks (Figure 2), each consisting of two successive FastKANConvLayer layers followed by batch normalization and ReLU activation, and a decoder.

Figure 2. DoubleConv block with built-in FastKANConvLayer layers

The network processed 2D slices with four input channels, corresponding to the four modalities, and produced a four-channel segmentation mask representing the probability distribution of anatomical structures.

Dataset. The BraTS 2020 benchmark dataset [20] served as the source data, from which three training subsamples of 50, 100, and 150 images were randomly extracted. Each four-channel image in the dataset comprised four modalities: T1-weighted; contrast-enhanced (gadolinium) T1-weighted (T1 CE); T2-weighted; and fluid-attenuated inversion recovery (FLAIR).

In addition, each image had annotations of tissue type verified by four clinical experts: healthy tissue; peritumoral region (edema/infiltration); non-contrast core; necrotic core; and enhancing tumor region.

The experiments used 2D slices extracted from the 3D data, with a focus on axial slices, which present tumor regions most accurately. The segmentation distinguishes the key regions of interest defined by the BraTS standard as three classes: whole tumor (WT), tumor core (TC), and enhancing tumor (ET).
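The relation between annotation labels and the three evaluated regions can be sketched as follows. The label ids assume the usual BraTS 2020 convention (0 for background, 1 for NCR/NET, 2 for ED, 4 for ET); the function name and the toy slice are hypothetical.

```python
# Assumed BraTS 2020 label ids: 0 background, 1 NCR/NET, 2 ED, 4 ET.
REGIONS = {
    "WT": {1, 2, 4},  # whole tumor: every tumor label
    "TC": {1, 4},     # tumor core: necrotic/non-enhancing core plus enhancing tumor
    "ET": {4},        # enhancing tumor only
}


def region_mask(label_slice, region):
    """Return a binary mask (nested lists of 0/1) for one region of a 2D label slice."""
    labels = REGIONS[region]
    return [[1 if value in labels else 0 for value in row] for row in label_slice]


# Toy 3x3 label slice (hypothetical values).
label_slice = [
    [0, 2, 2],
    [2, 1, 4],
    [0, 2, 0],
]
wt = region_mask(label_slice, "WT")
tc = region_mask(label_slice, "TC")
et = region_mask(label_slice, "ET")
```

The regions are nested (ET lies inside TC, which lies inside WT), so a single pixel can belong to several region masks at once.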

Data preprocessing. The preprocessing used in the present study involved several stages to improve the quality and homogeneity of the input data:

1) each MRI modality was independently normalized to the range [–1.0; 1.0] using min–max scaling to standardize the intensity distribution across scans;

2) gamma correction was applied with gamma values of 1.0, 1.5, 1.5, and 1.2 for T1, T1 CE, T2, and FLAIR slices, respectively;

3) the images were cropped to a fixed size of 224×224 pixels, centered on the brain region.
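The three steps above can be sketched in plain Python as shown below. The gamma values and the 224×224 crop size are taken from the list; everything else is an assumption made for illustration. In particular, this sketch applies gamma correction to intensities rescaled to [0, 1] before mapping them to [−1, 1] (raising negative values to a fractional power is undefined), and it crops around the geometric image center rather than a detected brain center.

```python
GAMMAS = {"T1": 1.0, "T1CE": 1.5, "T2": 1.5, "FLAIR": 1.2}  # values from step 2


def min_max_to_unit(img):
    """Min-max scale a 2D image (nested lists) to [0, 1]; a constant image maps to 0."""
    flat = [v for row in img for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        return [[0.0 for _ in row] for row in img]
    return [[(v - lo) / span for v in row] for row in img]


def gamma_correct(unit_img, gamma):
    """Apply v ** gamma to intensities that are already in [0, 1]."""
    return [[v ** gamma for v in row] for row in unit_img]


def to_signed_range(unit_img):
    """Map intensities from [0, 1] to [-1, 1]."""
    return [[2.0 * v - 1.0 for v in row] for row in unit_img]


def center_crop(img, size):
    """Crop a size x size window around the geometric center (assumed centering rule)."""
    h, w = len(img), len(img[0])
    top, left = (h - size) // 2, (w - size) // 2
    return [row[left:left + size] for row in img[top:top + size]]


def preprocess(img, modality, size=224):
    """Normalize, gamma-correct, and crop one modality of one slice."""
    unit = min_max_to_unit(img)
    corrected = gamma_correct(unit, GAMMAS[modality])
    return center_crop(to_signed_range(corrected), size)


# Toy 4x4 "slice" with intensities 0..15, cropped to 2x2 for brevity.
toy = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
out = preprocess(toy, "T2", size=2)
```

Each modality is processed independently, so a four-channel input would call `preprocess` once per channel with its own gamma value.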

When forming the training dataset, we used data augmentation, which included random 90° rotations and random shifts, to improve the generalization capability of the model.
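A minimal sketch of this kind of augmentation is given below. The key point it illustrates is that the same random rotation and shift must be applied to an image and its mask. The shift range, the fill values, and all function names are assumptions for illustration and are not the settings used in the study.

```python
import random


def rot90(img, k=1):
    """Rotate a 2D nested list counterclockwise by k * 90 degrees."""
    for _ in range(k % 4):
        img = [list(row) for row in zip(*img)][::-1]
    return img


def shift(img, dy, dx, fill=0):
    """Shift an image by (dy, dx) pixels; vacated pixels are set to `fill`."""
    h, w = len(img), len(img[0])
    out = [[fill] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            nr, nc = r + dy, c + dx
            if 0 <= nr < h and 0 <= nc < w:
                out[nr][nc] = img[r][c]
    return out


def augment(image, mask, rng, max_shift=2, image_fill=-1.0):
    """Apply one shared random rotation and shift to an image and its mask."""
    k = rng.randrange(4)                    # number of 90-degree turns
    dy = rng.randint(-max_shift, max_shift)
    dx = rng.randint(-max_shift, max_shift)
    aug_image = shift(rot90(image, k), dy, dx, fill=image_fill)
    aug_mask = shift(rot90(mask, k), dy, dx, fill=0)
    return aug_image, aug_mask


rng = random.Random(0)  # fixed seed so the sketch is reproducible
image = [[-1.0, 0.2], [0.5, 1.0]]
mask = [[0, 1], [1, 1]]
aug_image, aug_mask = augment(image, mask, rng, max_shift=1)
```

The default `image_fill=-1.0` assumes that background pixels sit at the lower end of the normalized intensity range.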

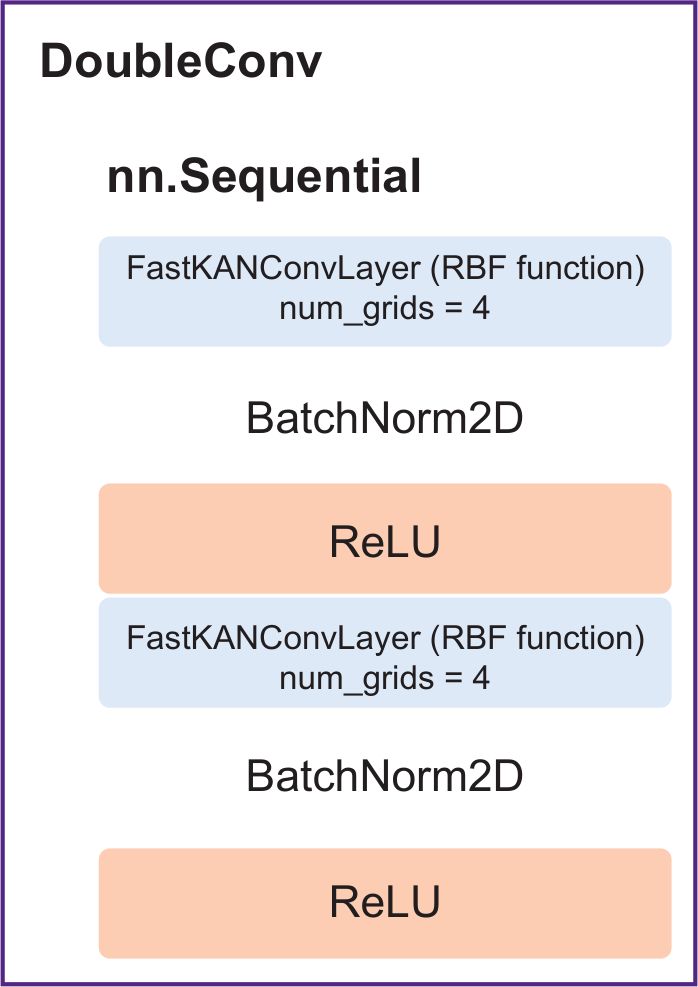

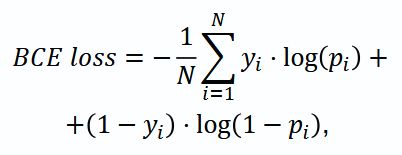

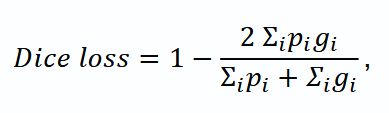

Loss function and optimization. As the loss function (Loss), we chose a combination of Dice loss and binary cross-entropy (BCE) loss, which accounts for the significant class imbalance in segmentation:

The value α=0.2 was found empirically to provide stable training and the best quality of the resulting segmentation.

where N is the number of pixels in an image; yi is the ground-truth label (0 or 1) of the i-th pixel; pi is the model-predicted probability that the i-th pixel belongs to class 1; log is the natural logarithm.

where pi is the predicted value for the i-th pixel; gi is the ground-truth label (0 or 1).
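A combined loss of this kind can be sketched as follows, using the standard definitions of binary cross-entropy and soft Dice loss. The weighting `alpha * BCE + (1 - alpha) * Dice` is an assumption: the text gives α=0.2 but this sketch does not know which term it multiplies. The clipping constant `eps` and the smoothing term `smooth` are likewise illustrative choices.

```python
import math


def bce_loss(probs, targets, eps=1e-7):
    """Mean binary cross-entropy over pixels; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(probs)


def dice_loss(probs, targets, smooth=1e-6):
    """Soft Dice loss: 1 - (2 * sum(p * g) + smooth) / (sum(p) + sum(g) + smooth)."""
    intersection = sum(p * g for p, g in zip(probs, targets))
    denominator = sum(probs) + sum(targets)
    return 1.0 - (2.0 * intersection + smooth) / (denominator + smooth)


def combined_loss(probs, targets, alpha=0.2):
    """Assumed weighting: alpha * BCE + (1 - alpha) * Dice."""
    return alpha * bce_loss(probs, targets) + (1.0 - alpha) * dice_loss(probs, targets)


# Flattened toy predictions for four pixels (hypothetical values).
targets = [1, 0, 1, 0]
perfect = combined_loss([1.0, 0.0, 1.0, 0.0], targets)
poor = combined_loss([0.1, 0.9, 0.2, 0.8], targets)
```

The Dice term depends on overlap rather than on per-pixel counts, which is why it is commonly paired with BCE when the foreground occupies a small fraction of the image.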

As the optimizer, we used AdamW with an initial learning rate lr=1e–4 and a weight decay of 1e–6. The learning rate scheduler was cosine annealing with restarts and a minimum learning rate of 1e–3.

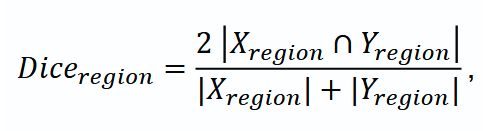

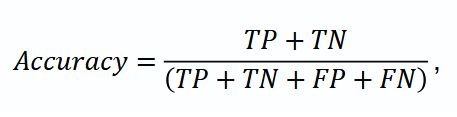

Evaluation metrics. The study carried out a comprehensive assessment that included Accuracy, the mean Dice coefficient, and the Dice coefficient for individual regions (Diceregion): WT, TC, and ET. The mean Dice coefficient, calculated as the mean of the Dice coefficients for ET, TC, and WT, served as the main metric for choosing the best model.

where TP (true positives) is the number of true positive results; TN (true negatives) is the number of true negative results; FP (false positives) is the number of false positive results; FN (false negatives) is the number of false negative results.
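In terms of these counts, the standard definitions are Accuracy = (TP + TN) / (TP + TN + FP + FN) and Dice = 2·TP / (2·TP + FP + FN). The sketch below computes both for binary masks and averages Dice over regions. The function names, the toy masks, and the convention of returning 1.0 when both masks are empty are assumptions for illustration.

```python
def confusion_counts(pred, truth):
    """Count TP, TN, FP, FN for two flattened binary masks of equal length."""
    tp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 1)
    tn = sum(1 for p, t in zip(pred, truth) if p == 0 and t == 0)
    fp = sum(1 for p, t in zip(pred, truth) if p == 1 and t == 0)
    fn = sum(1 for p, t in zip(pred, truth) if p == 0 and t == 1)
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    """Share of correctly classified pixels."""
    return (tp + tn) / (tp + tn + fp + fn)


def dice(tp, fp, fn):
    """Dice coefficient; defined here as 1.0 when both masks are empty (assumption)."""
    denominator = 2 * tp + fp + fn
    return 1.0 if denominator == 0 else 2 * tp / denominator


def mean_dice(dice_by_region):
    """Average the per-region Dice values, e.g. over ET, TC, and WT."""
    return sum(dice_by_region.values()) / len(dice_by_region)


# Toy flattened masks (hypothetical values).
pred = [1, 1, 0, 0, 1, 0]
truth = [1, 0, 0, 0, 1, 1]
tp, tn, fp, fn = confusion_counts(pred, truth)
acc = accuracy(tp, tn, fp, fn)
d = dice(tp, fp, fn)
```

Unlike Accuracy, Dice ignores true negatives, so it is not inflated by the large background area typical of tumor segmentation.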

Experiments. The experimental procedure consisted of training the KAN network independently on three subsamples of different sizes and comparing the results with existing SOTA convolutional neural network models.

The experiments were carried out using the following configuration:

frameworks: PyTorch, MONAI [21];

training sample sizes: 50, 100, and 150 images;

validation and test sample sizes: 50 images;

batch size: 2;

number of KAN grid points (num_grids): 4;

range of the KAN grid (grid_min, grid_max): [−1.0; 1.0];

number of epochs: 100.

Results

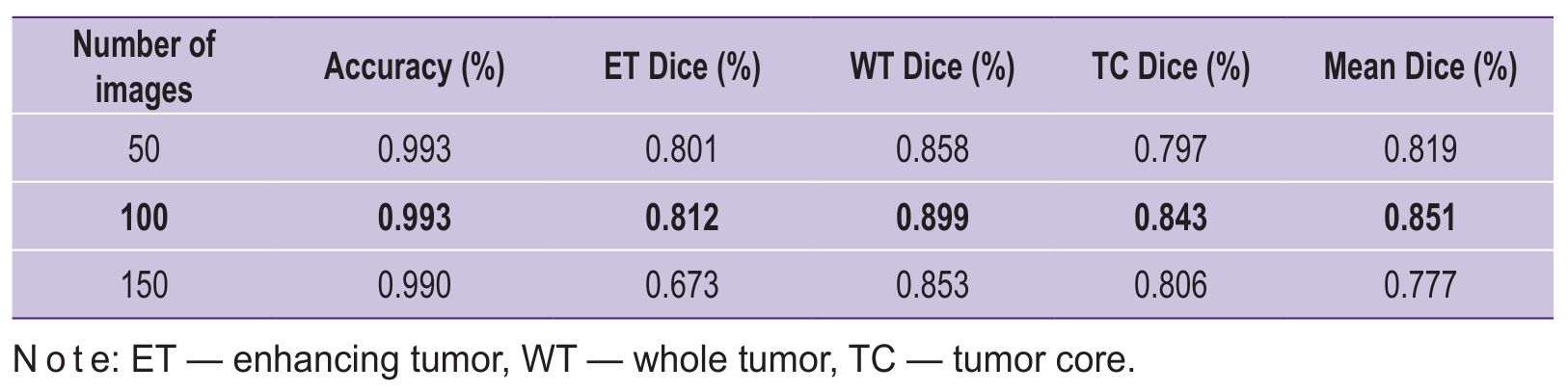

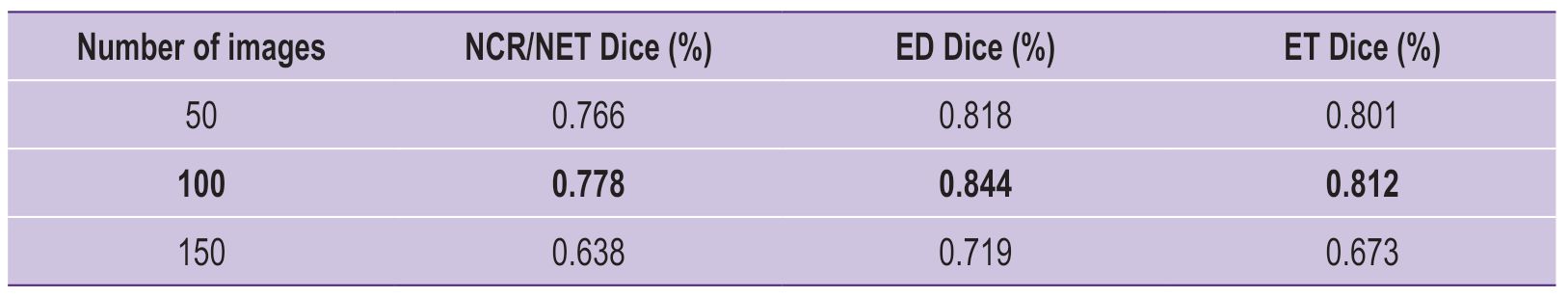

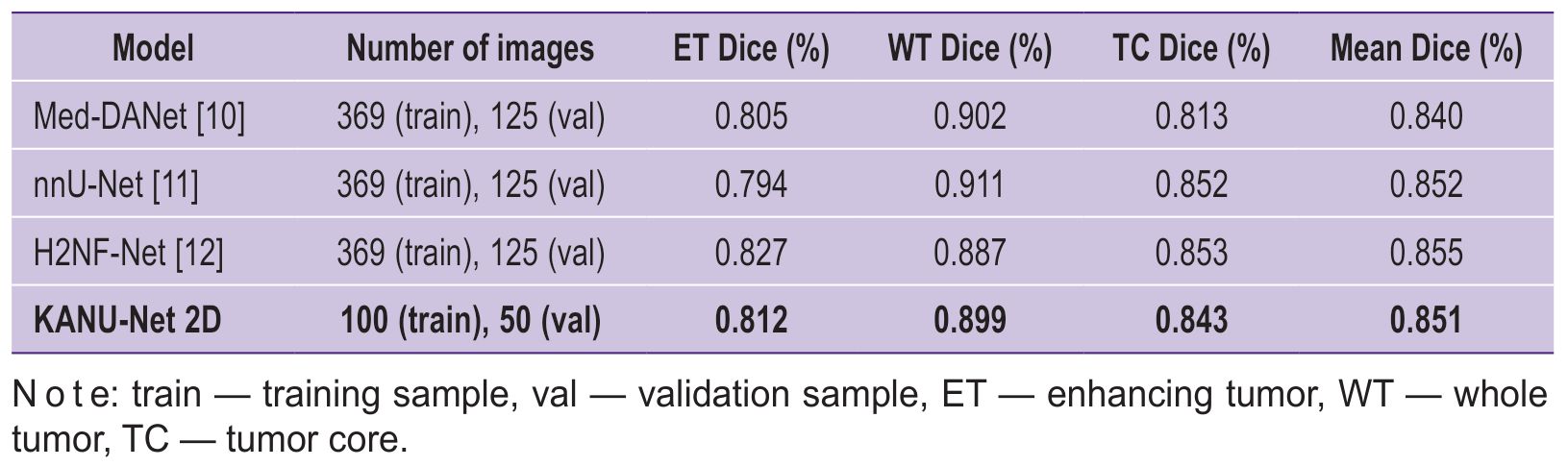

Tables 1 and 2 present the main performance metrics of the KANU-Net 2D model on the BraTS 2020 test set, focusing on specific tumor regions (ET, WT, TC). Table 3 compares the efficiency of the proposed approach with that of other popular models evaluated on the same dataset but trained on larger samples.

Table 1. Comparison of KANU-Net 2D model performance for different sample sizes

Table 2. Comparison of KANU-Net 2D model performance for the classes: necrotic and non-enhancing tumor core (NCR/NET), peritumoral edema (ED), and enhancing tumor (ET)

Table 3. KANU-Net 2D and SOTA models compared

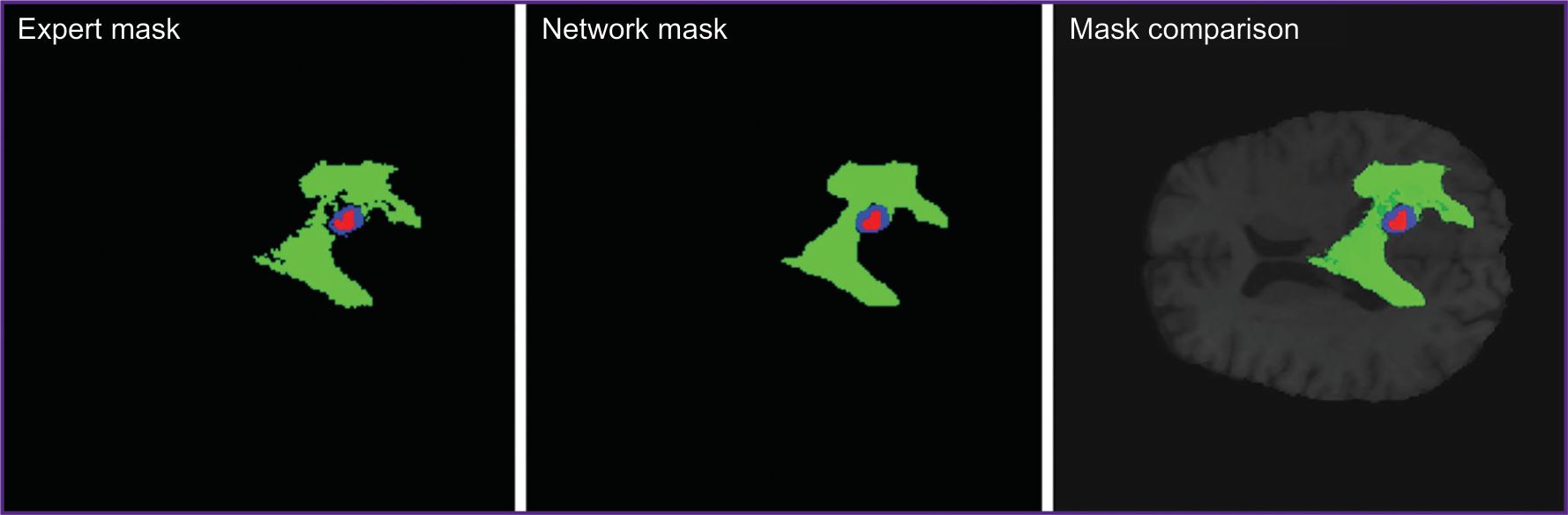

The experiments showed that the KANU-Net 2D model trained on small samples achieved a mean Dice coefficient of 0.851. This result exceeds that of Med-DANet [10], a model developed specifically for medical segmentation, and is comparable with current SOTA models trained on the full BraTS 2020 dataset. Figure 3 shows the segmentation results.

Figure 3. Expert segmentation mask, KANU-Net 2D prediction mask, and their comparison for a single MR image

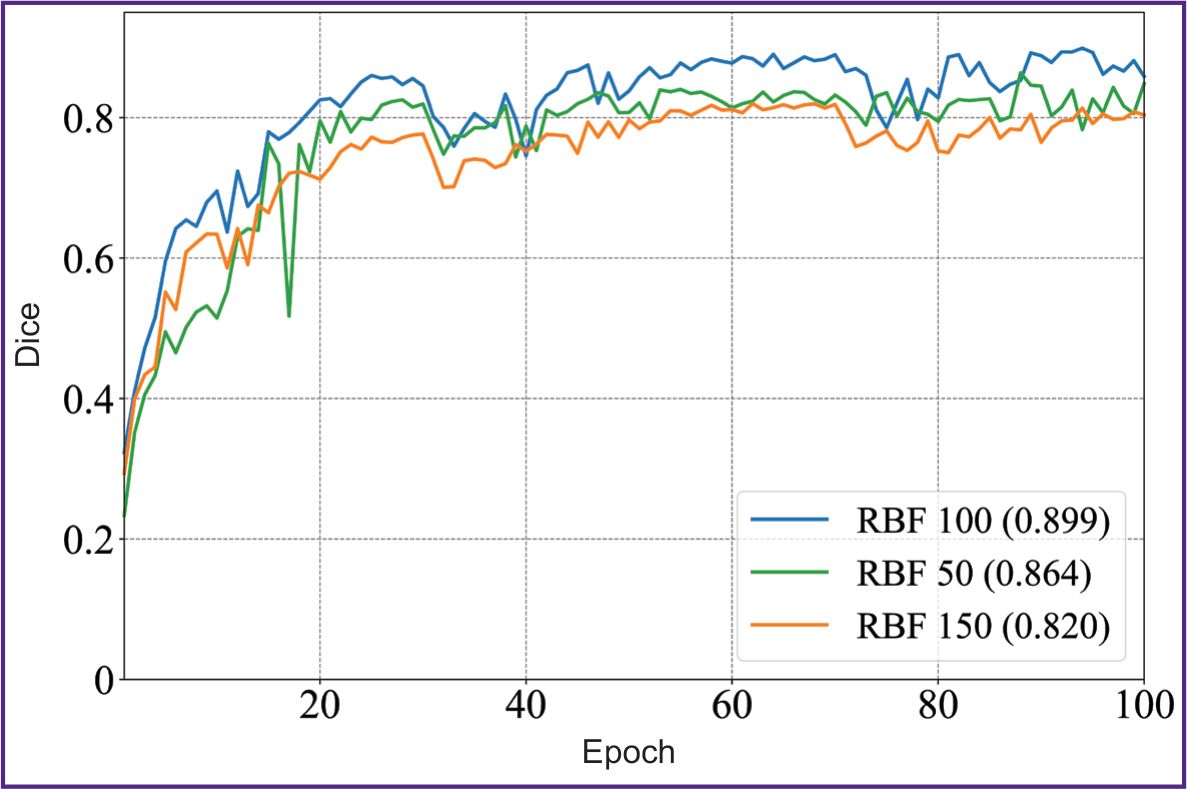

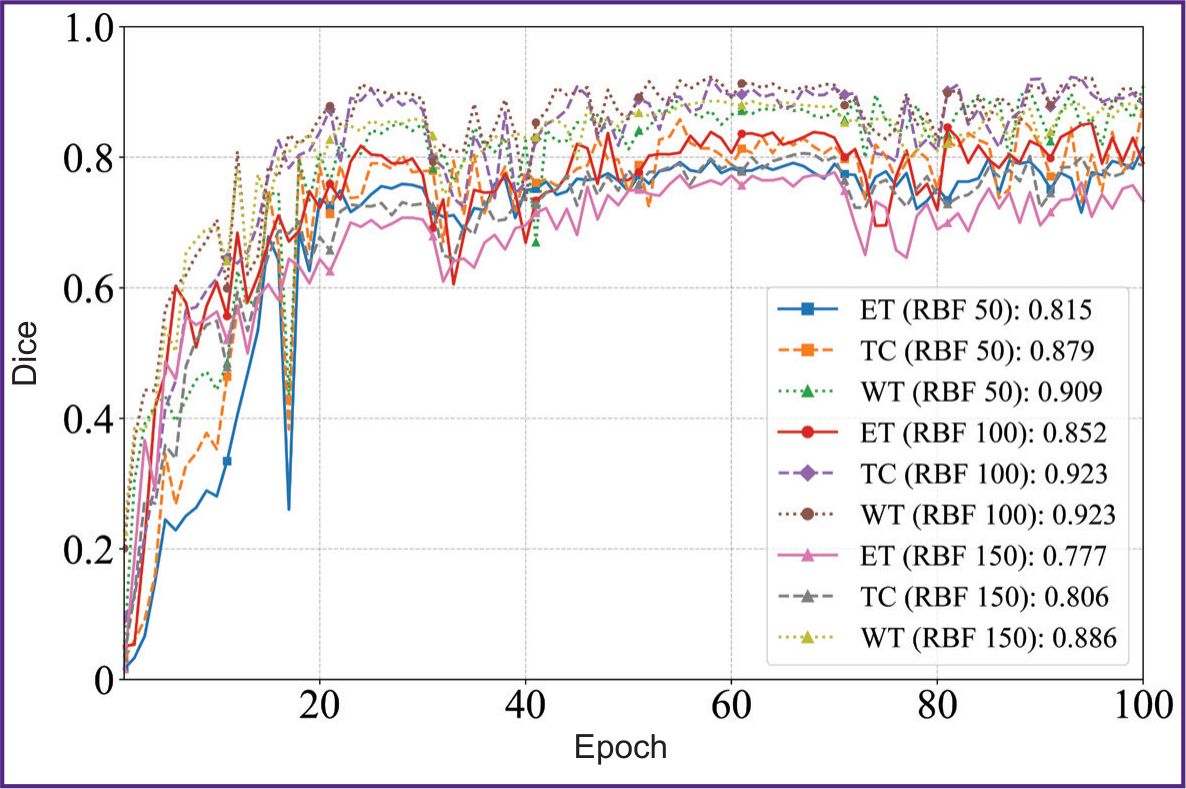

Figures 4 and 5 illustrate the training dynamics of the model on samples of 50, 100, and 150 images over 100 epochs. The quality metrics on the validation sample improved steadily throughout training, and the mean Dice coefficient reached a plateau after the 60th epoch. The KAN-based model achieved accuracy comparable with SOTA convolutional neural network architectures while using smaller training samples, which suggests that the KAN approach is applicable to processing high-tech medical images.

Figure 4. Mean Dice coefficient as a function of the training epoch for the KANU-Net 2D network (50, 100, 150 images)

Figure 5. Dice coefficient as a function of the training epoch for the KANU-Net 2D network for the ET, TC, and WT classes (50, 100, 150 images)

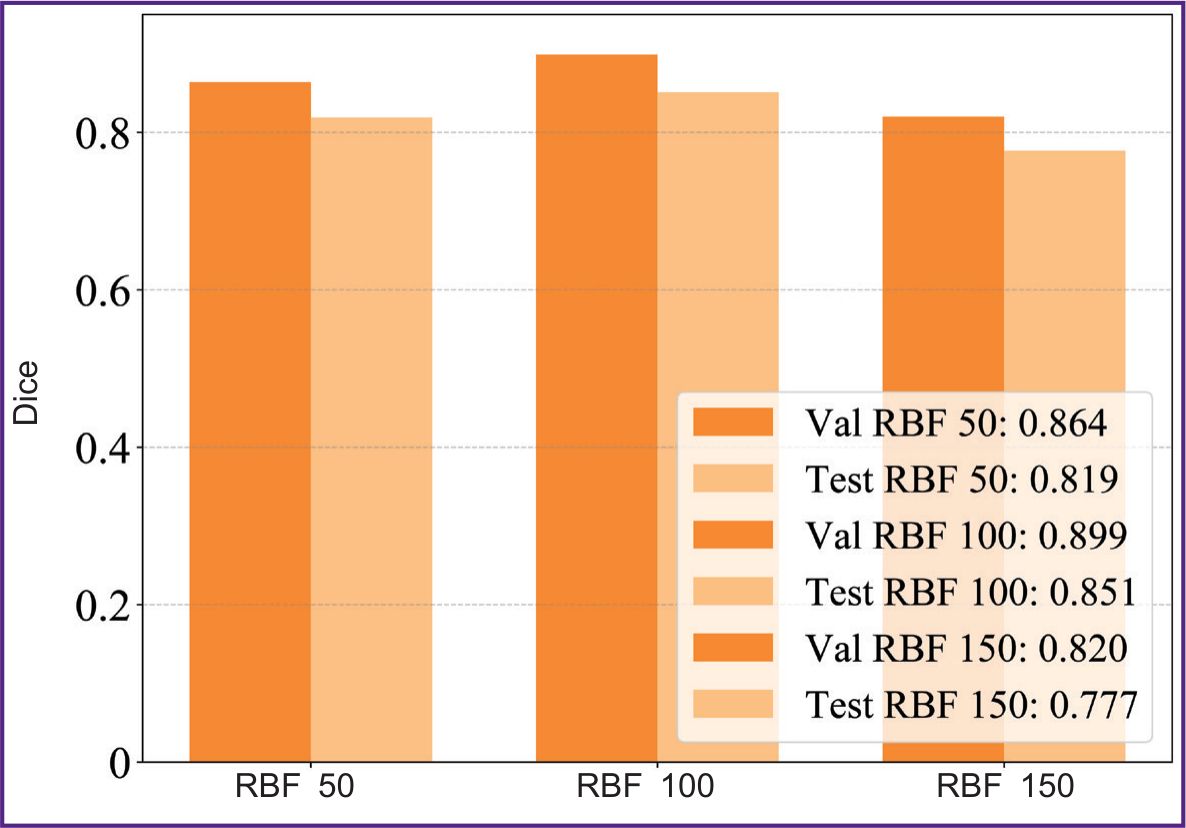

Figure 6 compares the model performance for different sample sizes on validation and test data. The sample size n=100 proved to be the best, achieving a Dice coefficient of 0.851.

Figure 6. Comparison of the Dice coefficient on validation (val) and test (test) samples for different training sample sizes (50, 100, 150 images) for the KANU-Net 2D network

Discussion

Similar studies on MRI segmentation are reported in the literature; however, other authors used full datasets or large samples of medical images [22] or trained the network simultaneously on several anatomically similar datasets [23] using heavy three-dimensional images. The study [22] used a similar MRI dataset, BraTS-GLI 2024, and applied a KAN model with efficient channel attention and pyramid feature aggregation modules, which achieved Dice coefficients of 0.88–0.90 in different tumor regions. However, a larger number of images was used for training: 1080 images in the training sample and 135 images in the validation and test samples.

The mean Dice coefficient obtained in the present study (0.851) on a sample of only 100 images appears to be clinically significant. For a neurosurgeon, such accuracy in segmenting the key tumor regions (ET, WT, TC) can be sufficient for managing certain patients. The Dice value of 0.899 for the WT region indicates that the total tumor volume can be estimated reliably. When a tumor is very large, its complete removal may be impossible, and surgery may therefore be inappropriate; in this case, only stereotactic biopsy is recommended. The approach will improve the quality of preoperative planning, especially when determining the extent of surgery. In stereotactic biopsy planning, accurate delineation of the contrast-enhancing tumor region (ET, Dice=0.812) is critical, since in most cases this region corresponds to the most malignant tumor components.

A limitation of the present study of the KANU-Net 2D network is the use of a single basis function, the RBF, although a great variety of basis functions exists [24], as well as the fixed number (n=4) of grid points (num_grids) in the network. The effect of these KAN characteristics on medical image segmentation requires further research.

The present study largely addressed the technical details of using Kolmogorov–Arnold networks to analyze small medical samples; however, its findings have a clear clinical focus. Training models on small datasets will make it possible to develop dedicated tools for segmenting rare pathologies, for which collecting hundreds of examples is impossible. Introducing such algorithms into clinical practice opens the way to specialized software for preoperative image analysis with image annotation and tumor volume assessment. Figure 3 visualizes the results and shows that the model copes adequately with the task, its predictions being visually close to the expert annotation.

Conclusion

The study showed that KANU-Net 2D outperforms the Med-DANet segmentation model both in the mean value and in some classes. Moreover, the network reaches competitive performance on the BraTS 2020 dataset compared with other models, as evidenced by a mean Dice of 0.851 for three tumor regions versus 0.852 and 0.855 for nnU-Net and H2NF-Net, respectively. The model's efficiency across tumor regions indicates that the KAN-based approach can adapt to various image characteristics in medical segmentation tasks.

In addition to technical efficiency, the demonstrated approach has significant clinical potential. Further research should focus on validating the model in real neurosurgical practice and on having practicing neurosurgeons assess the segmentation results. This will make it possible to move from metric-based assessment to estimating the real benefits for surgical planning.

Study funding. The present study had no external funding.

Conflict of interest. The authors declare no conflict of interest related to the present study.

References

1. World Health Organization. URL: https://www.who.int/ru/news-room/fact-sheets/detail/cancer.

2. Tyurin I.E. Screening of respiratory diseases: current trends. Atmosfera. Pul'monologiya i allergologiya 2011; 2: 12–16.

3. Abdulraqeb A.R.A., Sushkova L.T., Lozovskaya N.A. A review of tumor segmentation methods in brain MRI images. Prikaspiyskiy zhurnal: upravlenie i vysokie tekhnologii 2015; 1(29): 122–138.

4. Sharma N., Aggarwal L.M. Automated medical image segmentation techniques. J Med Phys 2010; 35(1): 3–14, https://doi.org/10.4103/0971-6203.58777.

5. Alam M.S., Rahman M.M., Hossain M.A., Islam M.K., Ahmed K.M., Ahmed K.T., Singh B.C., Miah M.S. Automatic human brain tumor detection in MRI image using template-based K means and improved fuzzy C means clustering algorithm. Big Data and Cognitive Computing 2019; 3(2): 27, https://doi.org/10.3390/bdcc3020027.

6. Shahid N., Rappon T., Berta W. Applications of artificial neural networks in health care organizational decision-making: a scoping review. PLoS One 2019; 14(2): e0212356, https://doi.org/10.1371/journal.pone.0212356.

7. Mikhelev V.M., Miroshnichenko A.S. The decision of the task of classification of human brain pathologies on MRI images. Nauchnyy rezul'tat. Informatsionnye tekhnologii 2019; 4(2): 43–52, https://doi.org/10.18413/2518-1092-2019-4-2-0-5.

8. Zhang Y., Liao Q., Ding L., Zhang J. Bridging 2D and 3D segmentation networks for computation efficient volumetric medical image segmentation: an empirical study of 2.5D solutions. Computerized Medical Imaging and Graphics 2022; 99: 102088, https://doi.org/10.1016/j.compmedimag.2022.102088.

9. Ronneberger O., Fischer P., Brox T. U-Net: convolutional networks for biomedical image segmentation. In: Navab N., Hornegger J., Wells W., Frangi A. (editors). Medical image computing and computer-assisted intervention – MICCAI 2015. MICCAI 2015. Lecture notes in computer science, vol. 9351. Springer, Cham; 2015, https://doi.org/10.1007/978-3-319-24574-4_28.

10. Wang W., Chen C., Wang J., Li J. Med-DANet: dynamic architecture network for efficient medical volumetric segmentation. In: Computer vision – ECCV 2022: 17th European conference. Tel Aviv, Israel, October 23–27, 2022. Proceedings, Part XXI. Israel; 2022; p. 506–522, https://doi.org/10.1007/978-3-031-19803-8_30.

11. Isensee F., Jäger P.F., Full P.M., Vollmuth P., Maier-Hein K.H. nnU-Net for brain tumor segmentation. In: Crimi A., Bakas S. (editors). Brainlesion: glioma, multiple sclerosis, stroke and traumatic brain injuries. BrainLes 2020. Lecture notes in computer science, vol. 12659. Springer, Cham; 2021; p. 118–132, https://doi.org/10.1007/978-3-030-72087-2_11.

12. Jia H., Cai W., Huang H., Xia Y. H2NF-Net for brain tumor segmentation using multimodal MR imaging: 2nd place solution to BraTS challenge 2020 segmentation task. In: Crimi A., Bakas S. (editors). Brainlesion: glioma, multiple sclerosis, stroke and traumatic brain injuries. BrainLes 2020. Lecture notes in computer science, vol. 12659. Springer, Cham; 2021, https://doi.org/10.1007/978-3-030-72087-2_6.

13. Poryaeva E.P., Evstafyeva V.A. Artificial intelligence in medicine. Vestnik nauki i obrazovaniya 2019; 6-2(60): 18.

14. Nosovskiy A.M., Pikhlak A.E., Logachev V.A., Chursinova I.I., Mutyeva N.A. Statistics of small samples in medical research. Meditsinskaya statistika i metodologiya 2022; 3: 45–52.

15. Liu Z., Tegmark M., Ma P., Matusik W., Wang Y. Kolmogorov–Arnold networks meet science. Phys Rev X 2025; 15(4), https://doi.org/10.1103/4t7t-v19l.

16. Yang Z., Zhang J., Luo X., Wu X., Lu Z., Shen L. MedKAN: an advanced Kolmogorov–Arnold network for medical image classification. In: IEEE International Conference on Bioinformatics and Biomedicine (BIBM). China; 2025; p. 3090–3097, https://doi.org/10.1109/bibm66473.2025.11356561.

17. Ibrahum A.D.M., Shang Z., Hong J.E. How resilient are Kolmogorov–Arnold networks in classification tasks? A robustness investigation. Applied Sciences 2024; 14(22): 10173, https://doi.org/10.3390/app142210173.

18. Li C., Liu X., Li W., Wang C., Liu H., Liu Y., Chen Z., Yuan Y. U-KAN makes strong backbone for medical image segmentation and generation. Proceedings of the AAAI Conference on Artificial Intelligence 2025; 39(5): 4652–4660, https://doi.org/10.1609/aaai.v39i5.32491.

19. Jaouad T. KANU-Net: Kolmogorov–Arnold networks based U-Net architecture for images segmentation. URL: https://github.com/JaouadT/KANU_Net.

20. Menze B.H., Jakab A., Bauer S., Kalpathy-Cramer J., Farahani K., Kirby J., Burren Y., Porz N., Slotboom J., Wiest R., Lanczi L., Gerstner E., Weber M.A., Arbel T., Avants B.B., Ayache N., Buendia P., Collins D.L., Cordier N., Corso J.J., Criminisi A., Das T., Delingette H., Demiralp Ç., Durst C.R., Dojat M., Doyle S., Festa J., Forbes F., Geremia E., Glocker B., Golland P., Guo X., Hamamci A., Iftekharuddin K.M., Jena R., John N.M., Konukoglu E., Lashkari D., Mariz J.A., Meier R., Pereira S., Precup D., Price S.J., Raviv T.R., Reza S.M., Ryan M., Sarikaya D., Schwartz L., Shin H.C., Shotton J., Silva C.A., Sousa N., Subbanna N.K., Szekely G., Taylor T.J., Thomas O.M., Tustison N.J., Unal G., Vasseur F., Wintermark M., Ye D.H., Zhao L., Zhao B., Zikic D., Prastawa M., Reyes M., Van Leemput K. The multimodal brain tumor image segmentation benchmark (BRATS). IEEE Trans Med Imaging 2015; 34(10): 1993–2024, https://doi.org/10.1109/TMI.2014.2377694.

21. Cardoso M., Li W., Brown R., Ma N., Kerfoot E., Wang Y., Murrey B., Myronenko A., Zhao C., Yang D., Nath V., He Y., Xu Z., Hatamizadeh A., Myronenko A., Zhu W., Liu Y., Zheng M., Tang Y., Yang I., Zephyr M., Hashemian B., Alle S., Darestani M.Z., Budd C., Modat M., Vercauteren T., Wang G., Li Y., Hu Y., Fu Y., Gorman B., Johnson H., Genereaux B., Erdal B.S., Gupta V., Diaz-Pinto A., Dourson A., Maier-Hein L., Jaeger P.F., Baumgartner M., Kalpathy-Cramer J., Flores M., Kirby J., Cooper L.A.D., Roth H.R., Xu D., Bericat D., Floca R., Zhou S.K., Shuaib H., Farahani K., Maier-Hein K.H., Aylward S., Dogra P., Ourselin S., Feng A. MONAI: an open-source framework for deep learning in healthcare. 2022. URL: https://arxiv.org/abs/2211.02701.

22. Chen Y., Tang T., Kim T., Shu H. UKAN-EP: enhancing U-KAN with efficient attention and pyramid aggregation for 3D multi-modal MRI brain tumor segmentation. BMC Medical Imaging 2025; 25(1), https://doi.org/10.1186/s12880-025-02053-w.

23. Ilerioluwakiiye A., Udo A., Ojo A., Oyetunji A., Ajigbotosho H., Iorumbur A., Raymond C., Adewole M. Domain-adaptive transformer for data-efficient glioma segmentation in Sub-Saharan MRI. 2025. URL: https://arxiv.org/abs/2511.02928.

24. Seydi S.T. Exploring the potential of polynomial basis functions in Kolmogorov–Arnold networks: a comparative study of different groups of polynomials. 2024. URL: https://arxiv.org/abs/2406.02583.